Generative and analytical artificial intelligence systems are evolving at an increasingly rapid pace. Writing texts, summarizing them, and analyzing images are all tasks that the most advanced AI models, such as ChatGPT-4, now handle with ease. But what happens when these functions are combined and put at the service of disabled people's daily lives? Lucy Edwards, a blind young English woman, shows the answer on her Instagram profile, where she demonstrates how the Be My Eyes application works. Initially created to put blind people in contact with volunteers who help them remotely with everyday tasks, the software has started to rely on ChatGPT for the analysis and advice it provides to its users.

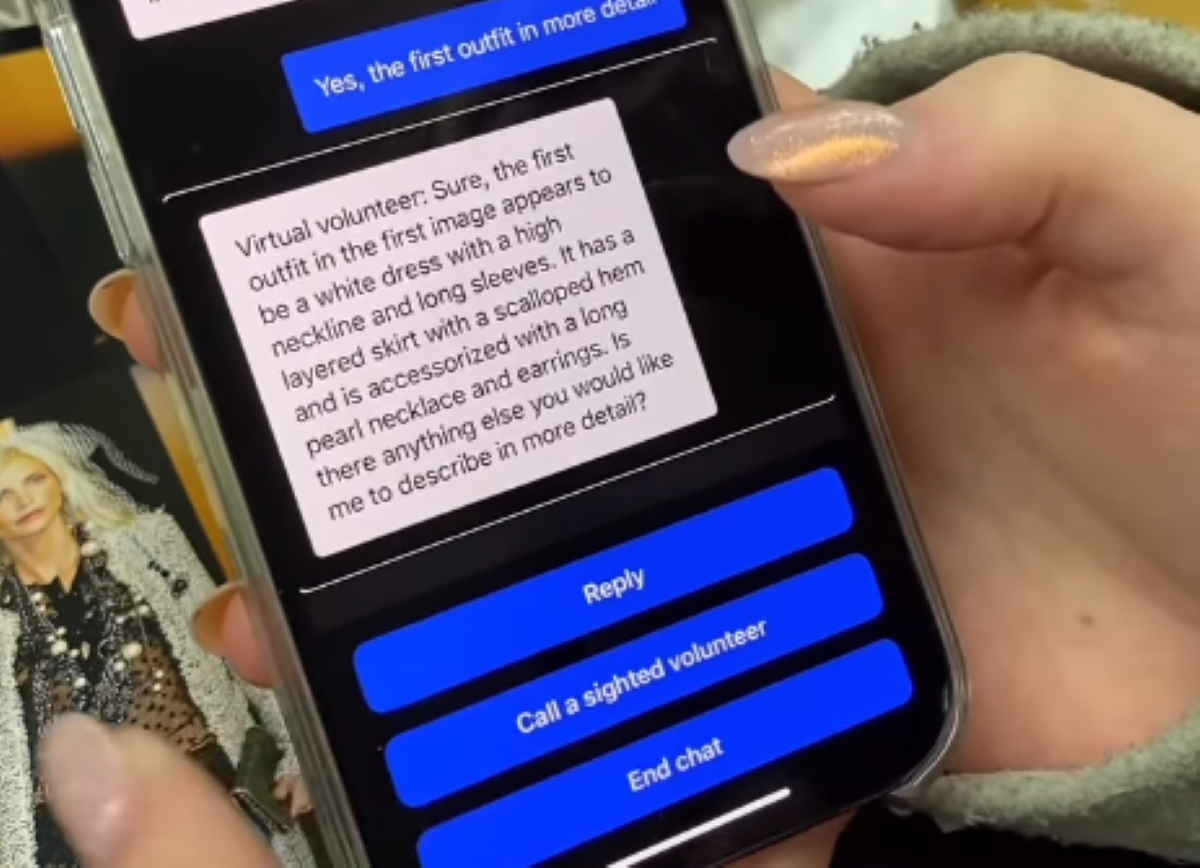

Complex technology lies behind a simple operation. In the reel published by Edwards, you can see her in the gym. She says she intends to continue her workout with a jog, which is why she needs to find out where the treadmills are. She picks up her phone, snaps a photo of the room, and after a few moments the application starts reading out a text, generated on the spot, with directions to reach them. Once there, Edwards has to work out which machines are free. The process is the same: she takes a picture, and Be My Eyes does what it promises, telling her how to reach the free treadmill. A different situation works in a similar way. This time Edwards is in a store selling Asian goods, where she needs to decide which product to buy. Holding a bottle in her hand, she frames the label with Be My Eyes, and the virtual assistant translates it and explains what the product contains. The application can be even more detailed, as when Edwards uses it on a Chanel catalog and the recognition system precisely describes the clothes worn by the models.
