
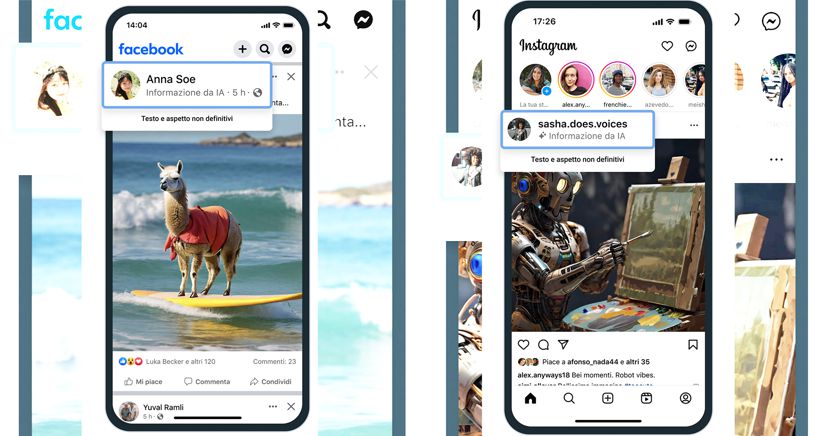

The AI industry still lags in building common standards for identifying AI-generated images, video and audio, but there is movement in that direction. Meta has already started labeling images created with its own generator, Imagine AI, on Facebook, Instagram and Threads as “Information from AI”. The company's next step is to help people understand when the photorealistic content they are seeing was created with AI, by detecting and labeling, as far as possible, images generated by third-party AI services from providers such as Google, OpenAI, Microsoft, Adobe, Midjourney and Shutterstock.

Pushing for common standards

Meta considers these measures essential to push the technology industry toward common standards, as AI-generated media becomes increasingly difficult to distinguish from reality. “We are working with industry partners to align on common technical standards that can signal when content has been created using AI. The ability to detect these signals will allow us to label AI-generated images that users post on Facebook, Instagram and Threads, in all languages supported by each app. This approach will be adopted throughout next year, during which important elections will be held around the world,” Nick Clegg, President of Global Affairs at Meta, said in a press note.

Meta says it is developing tools that can identify invisible markers in AI-generated content, so that it can label images created by other companies' AI generation tools “as they implement their plans to add metadata to images created by their tools.” According to Meta, these standard indicators will allow AI-generated images to be detected automatically. “We are working hard to develop classifiers that help us automatically identify AI-generated content, even content that does not have invisible marks. At the same time, we are looking at ways to make it more difficult to remove or alter invisible watermarks,” Meta said.

The challenge for deepfake videos

Debunking fake images is one thing, but for AI-generated video and audio, Clegg suggests that detection is generally still too difficult, because tagging and watermarking have not yet been adopted at sufficient scale for detection tools to do a good job. “It is not yet possible to identify all AI-generated content, as there are ways in which people can eliminate invisible markers,” Clegg explains. “This is why we are evaluating a number of options, working hard to develop classifiers that help us automatically identify AI-generated content, even content that does not have invisible watermarks, and at the same time, we are looking for ways to make it more difficult to remove or alter invisible watermarks.”

The fight against deepfakes will also involve users

To combat the spread of deepfake video and audio, Meta also intends to hold users accountable, requiring those who post “photorealistic” AI videos or “realistic” AI audio to disclose that the content is artificial. In addition, AI-generated content can be reviewed by the independent fact-checking partners that Meta works with, and flagged where necessary, so that people get accurate information when they come across content of dubious origin.

Use of large language models in content moderation

Clegg also discussed Meta’s use of generative AI as a tool to help enforce its policies. “We are using LLMs to remove content in certain circumstances. This way, our reviewers can focus on content that is most likely to violate our rules.”